What data do you risk losing if you don't adopt a Student Model in your Learning Analytics?

Digital training invests heavily in the quality of the training experience, not only to increase people's ability to use digital tools but also, and above all, to improve that experience by aligning it with their real needs.

On the one hand, we are seeing the growth of unstructured training models (micro-learning, social learning, informal learning), which tend to dilute training into a just-in-time perspective, exploiting the potential of digital to bring knowledge to people at the moment the need arises. On the other hand, we are witnessing the growth of Gamification models, which reward the "metaphorization" of the learning object into an "exemplary" game mechanic that concentrates cognitive effort on the fundamental tasks only.

In this scenario, the third element of innovation is the search for adaptivity: training must be offered to each individual in a way that respects their training needs and the time they have available.

All these lines of innovation share a trend towards the individualization of training, which focuses on the "user", understood as an "active" subject of their own training experience (almost in contrast with the literal meaning of user, that is, the consumer of a standard product).

While this is critical to the sense-making and engagement of training, which are essential levers of the training path and of professional growth, it also risks losing focus on the overall training results that must be guaranteed to the client organization.

In fact, if the training model specializes in the individual, there remains the need for Training and HR to have a global picture of skills and preparation levels.

Therefore, in this pursuit of individual engagement, it is always necessary to guarantee the client organization an adequate level of control over training in general. Hence the need for complex Learning Analytics systems.

So, should we blur the User or bring them into focus?

It remains fundamental to observe and interpret the data in order to understand the significance of the training experience, both globally and at the individual level.

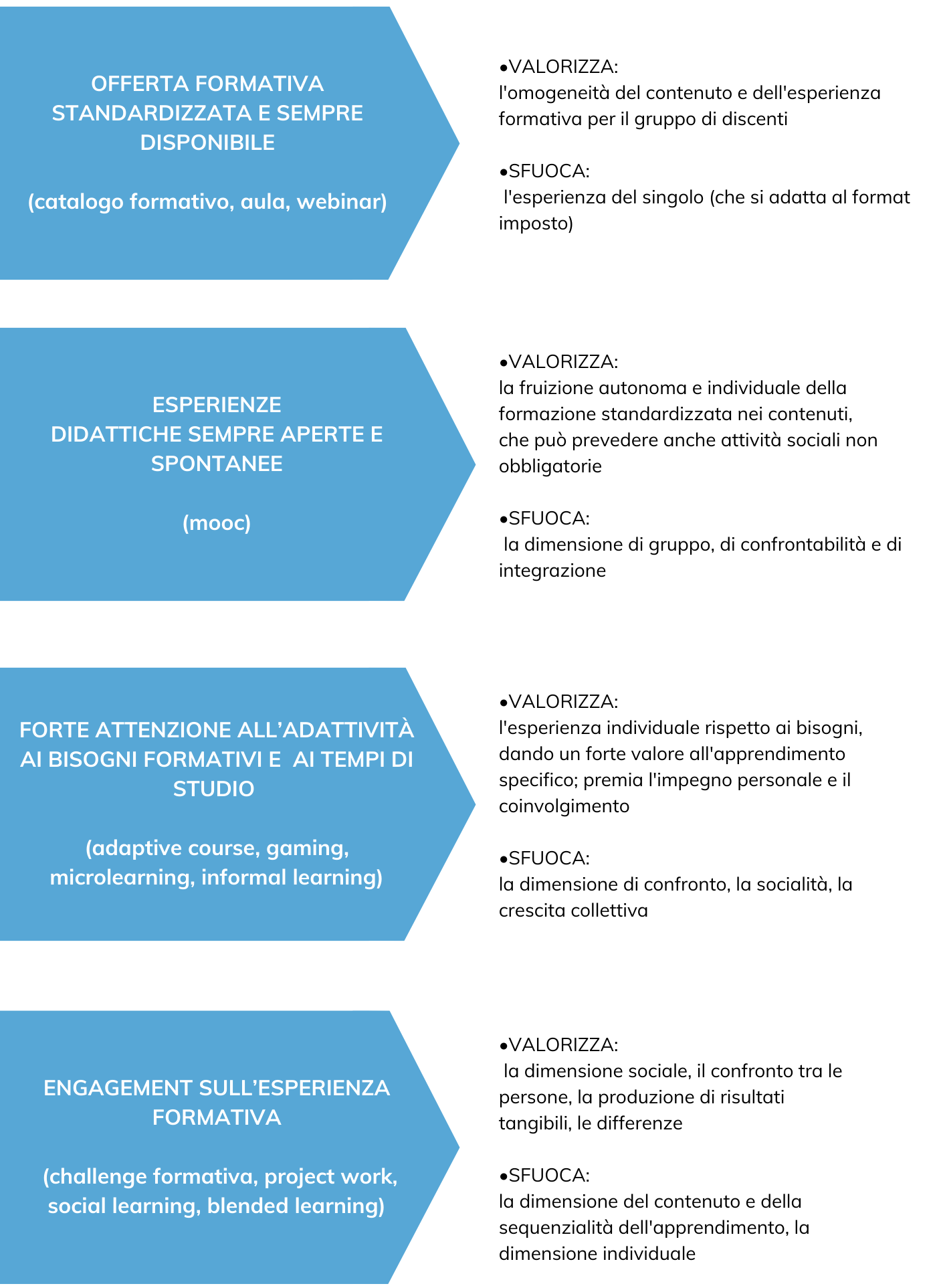

But what do the different experiences offer us as "valuable" information? Which didactic models are valued in an individual vs. group approach, and which ones remain out of focus?

Considering these differences, what data does it make sense to examine in teaching?

The point of view from which we look at the data always carries implicit questions.

- If we look at individual data, we read them against the behavior expected for that course (what did the user do differently from the group? Was the difference positive, negative, or both?),

- If we look at group data, we examine the average results and the deviations from them, with the aim of isolating the critical cases that deserve an intervention (what are the average behaviors of the group? Are they adequate or not? Who is out of the norm?).
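The group-level reading described in the second point can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the learner names, the minutes spent, the function name `out_of_norm`, and the cut-off of 1.5 standard deviations are all hypothetical, not values taken from a real course.

```python
from statistics import mean, pstdev

# Hypothetical data: minutes each learner spent on the same course.
minutes_spent = {
    "anna": 42, "bruno": 45, "carla": 40, "dario": 44,
    "elena": 43, "fabio": 90,
}

def out_of_norm(values, threshold=1.5):
    """Return the learners whose value lies more than `threshold`
    population standard deviations away from the group mean."""
    avg = mean(values.values())
    spread = pstdev(values.values())
    if spread == 0:
        return []  # everyone behaved identically: nobody is out of the norm
    return sorted(
        name for name, v in values.items()
        if abs(v - avg) / spread > threshold
    )

flagged = out_of_norm(minutes_spent)
print(flagged)
```

With these made-up figures only one learner stands out from the group. Whether that deviation is a problem or a strength is exactly the question the data alone cannot answer.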

In this kind of inquiry, however, we risk losing the overall elements that would help us better understand the didactic scenario.

What is the essential information at the individual and group level? What data are we willing to blur to get more detail on the data that interests us?

To maintain an overall view that takes into account both individual and group data, we must necessarily make the analysis perspective more complex.

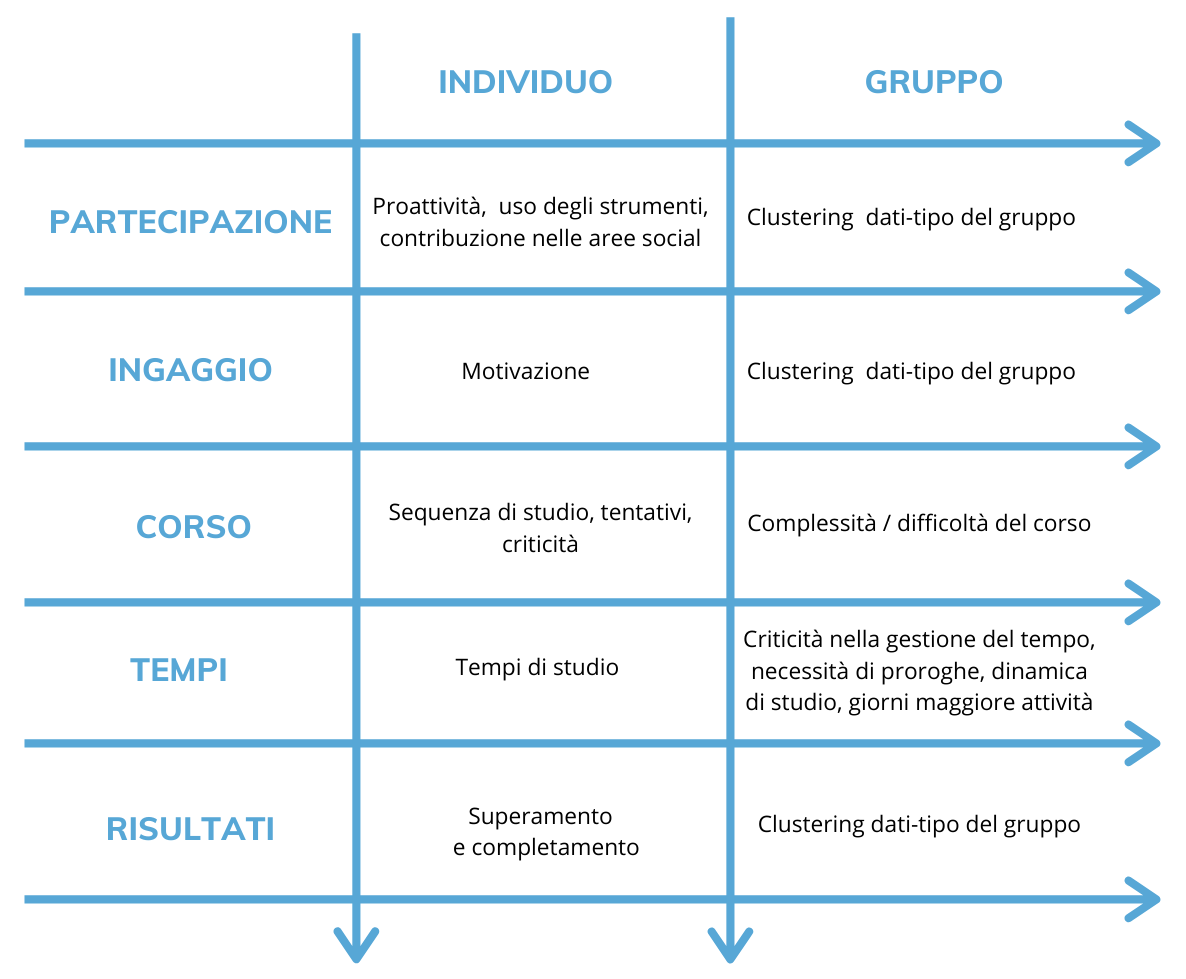

By taking a data science approach, we can combine the data in the LMS to arrive at a Student Model that is able to measure the teaching experience in all its complexity, without losing any information.

In essence, the tracked data must be read through a grid that makes it possible to investigate not the single result but a series of behaviors, expressed as behavioral indices of the person being trained.
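As a rough sketch of what such a grid might look like in practice, the snippet below collapses raw LMS tracking events into a small set of behavioral indicators per learner. The event fields (`user`, `action`, `minutes`) and the three indicators are assumptions made for illustration; a real LMS export will have its own schema and a richer set of behaviors.

```python
from collections import defaultdict

# Hypothetical tracking events exported from an LMS.
events = [
    {"user": "anna", "action": "open", "minutes": 10},
    {"user": "anna", "action": "open", "minutes": 15},
    {"user": "anna", "action": "complete", "minutes": 5},
    {"user": "bruno", "action": "open", "minutes": 30},
]

def behavior_indicators(events):
    """Collapse single events into per-learner behavioral indicators."""
    grid = defaultdict(lambda: {"sessions": 0, "minutes": 0, "completions": 0})
    for e in events:
        row = grid[e["user"]]
        row["minutes"] += e["minutes"]
        if e["action"] == "open":
            row["sessions"] += 1
        elif e["action"] == "complete":
            row["completions"] += 1
    return dict(grid)

indicators = behavior_indicators(events)
```

Here two learners with the same total time end up with different rows in the grid, which is the kind of difference a single result such as "completed / not completed" would hide.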

Through pattern analysis it is in fact possible to synthesize, and therefore describe, the training experience using indicators that not only represent the individual's experience but also measure it against the behavior of the reference group, so that the analysis does not lose the fundamental requirement that measures be comparable.

No piece of information is good or bad in itself. For example, a meter is a lot or a little only when we compare it to a centimeter or a kilometer. Every data point must be compared with a benchmark before it can be assessed.

This aspect of comparability is fundamental, because it brings with it the need for data normalization.
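One common way to normalize against a benchmark is the z-score, which expresses a value as a distance from the reference group's mean, measured in standard deviations. The article does not prescribe a specific technique, so the sketch below is only one possible choice; the function name `z_score` and both sets of course scores are hypothetical.

```python
from statistics import mean, pstdev

def z_score(value, reference_group):
    """Express `value` in standard deviations from the reference group's mean."""
    spread = pstdev(reference_group)
    if spread == 0:
        return 0.0  # no variation in the group: the value sits on the benchmark
    return (value - mean(reference_group)) / spread

# Hypothetical final scores of two reference groups.
course_a_scores = [50, 55, 60, 65, 70]   # a demanding course
course_b_scores = [80, 85, 90, 95, 100]  # an easier course

z_a = z_score(70, course_a_scores)  # raw score 70 in course A
z_b = z_score(80, course_b_scores)  # raw score 80 in course B
```

In this example the raw score of 80 looks better than the raw score of 70, yet once each is read against its own group the 70 is above the benchmark (`z_a` is positive) and the 80 is below it (`z_b` is negative).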

Adopting an effective measurement model allows you to look at all the training activities with the same lenses, and have specific and functional information for a didactic intervention strategy.

Learning Analytics therefore become truly useful tools for Training and HR only if they adopt a Student Model, i.e. a complex model for representing the training experience that is able to:

- describe the individual's experience across all types of courses,

- adopt KPIs derived from data (data-driven) and not simply classified ex ante by experts,

- include the typical target group behavior as a benchmark,

- be transversal to didactic models.

Based on the derived data, it is then possible to reclassify behaviors ex post into differential intervention patterns, so that the best strategies can be adopted for each case: strategies that are not pre-established but derived directly from reading the descriptive data of the learning experience.
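To give a concrete idea of what deriving patterns ex post could mean, the sketch below groups learners with a very small k-means clustering. k-means is an assumption introduced here for illustration, not the method the article names; the two indicators (engagement, result), their values, and the choice of two groups are likewise hypothetical.

```python
from math import dist

def k_means(points, k, iterations=20):
    """Tiny k-means: returns (centroids, labels). Initial centroids are the
    first k points, so the result is deterministic for a given input order."""
    centroids = [tuple(p) for p in points[:k]]
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assign each learner to the nearest centroid.
        labels = [
            min(range(k), key=lambda c: dist(p, centroids[c]))
            for p in points
        ]
        # Move each centroid to the mean of its current members.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                centroids[c] = tuple(
                    sum(axis) / len(members) for axis in zip(*members)
                )
    return centroids, labels

# Hypothetical normalized indicators per learner: (engagement, result).
learners = {
    "anna": (1.2, 1.0), "bruno": (-1.1, -0.9), "carla": (1.0, 1.3),
    "dario": (-0.8, -1.2), "elena": (0.9, 0.8), "fabio": (-1.0, -1.0),
}
centroids, labels = k_means(list(learners.values()), k=2)
patterns = dict(zip(learners, labels))
```

The groups are not labeled in advance: they emerge from the data, and only afterwards would Training and HR decide which intervention suits each one.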

Daniela Pellegrinic

Expert in educational design and R&D